Seedance 2.0

Create cinematic AI videos with the Seedance 2.0 Video Generator on OpenArt. Bring your ideas to life with powerful multi-modal control and native audio generation.

Multi-Modal Support

Seedance 2.0 combines text, images, videos, and audio to guide your video across multiple scenes. You can upload up to 9 images, 3 audio clips, and 3 videos (15s total) as references to create cohesive, visually consistent videos that match your creative vision.
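If you prepare reference files in a script before uploading, the limits above can be checked ahead of time. The sketch below is illustrative only: `Reference` and `check_references` are hypothetical names, not part of any OpenArt or Seedance API. It assumes the 15s total applies to the combined length of the reference videos, and it also applies the 12-file overall cap mentioned in the how-to steps on this page.

```python
from dataclasses import dataclass

# Limits as stated on this page. All names here are hypothetical,
# chosen for illustration; they do not come from an official API.
MAX_IMAGES = 9
MAX_AUDIO = 3
MAX_VIDEOS = 3
MAX_VIDEO_SECONDS = 15.0   # assumption: combined length of reference videos
MAX_TOTAL_FILES = 12       # overall cap from the how-to steps


@dataclass
class Reference:
    kind: str             # "image", "audio", or "video"
    seconds: float = 0.0  # duration; only used for videos in this sketch


def check_references(refs):
    """Return a list of problems; an empty list means the set fits the limits."""
    problems = []
    limits = {"image": MAX_IMAGES, "audio": MAX_AUDIO, "video": MAX_VIDEOS}
    counts = {kind: 0 for kind in limits}

    # Count references per type and flag anything unrecognized.
    for ref in refs:
        if ref.kind not in counts:
            problems.append(f"unknown reference kind: {ref.kind}")
            continue
        counts[ref.kind] += 1

    # Per-type caps.
    for kind, limit in limits.items():
        if counts[kind] > limit:
            problems.append(f"too many {kind} references: {counts[kind]} > {limit}")

    # Combined video duration cap.
    video_seconds = sum(r.seconds for r in refs if r.kind == "video")
    if video_seconds > MAX_VIDEO_SECONDS:
        problems.append(f"reference videos too long: {video_seconds}s > {MAX_VIDEO_SECONDS}s")

    # Overall file cap.
    if len(refs) > MAX_TOTAL_FILES:
        problems.append(f"too many files: {len(refs)} > {MAX_TOTAL_FILES}")

    return problems
```

For example, `check_references([Reference("video", 10.0), Reference("video", 6.0)])` reports a single problem, because the two clips together run longer than 15 seconds.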

Director-Level Camera Control

You can use Seedance 2.0 for full control over lighting, shadows, and performance, while handling advanced camera moves like tracking, dolly, POV, and rack focus shots. Provide a reference video, and the Seedance 2.0 AI video model will reproduce it with cinematic precision.

Built-in Audio Generation And Lip-Sync

Seedance 2.0 creates audio alongside your video to produce clear dialogue, synchronized sound effects, and immersive music automatically. Context-aware audio and precise lip-sync ensure audio matches the action and timing in your video, removing the need for extra post-production.

How To Use Seedance 2.0 AI Video Model Generator

Turn your ideas into stunning AI videos using ByteDance Seedance 2.0 in just a few simple steps.

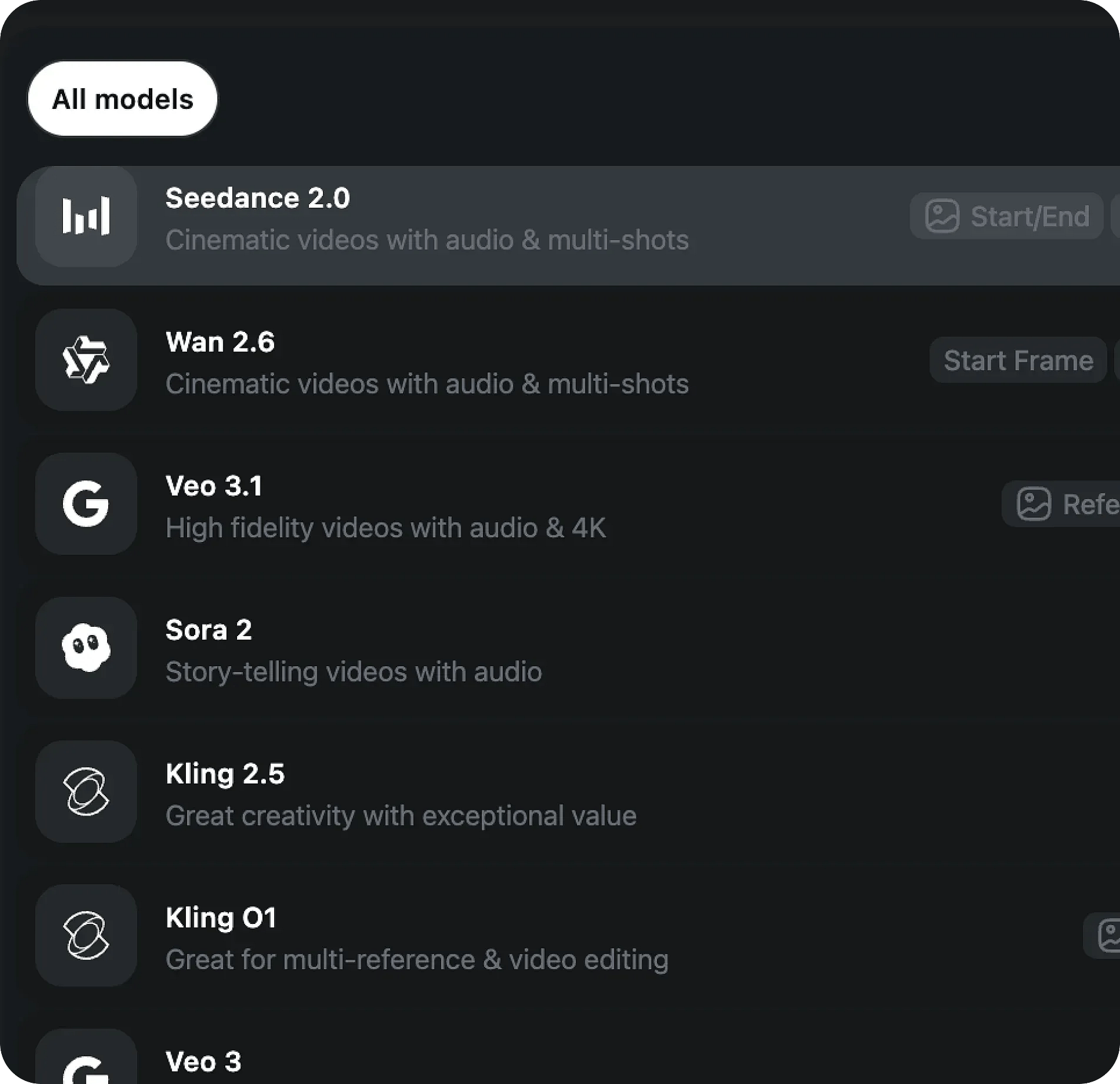

Pick the model

To get started, select the Seedance 2.0 AI video model as your starting point.

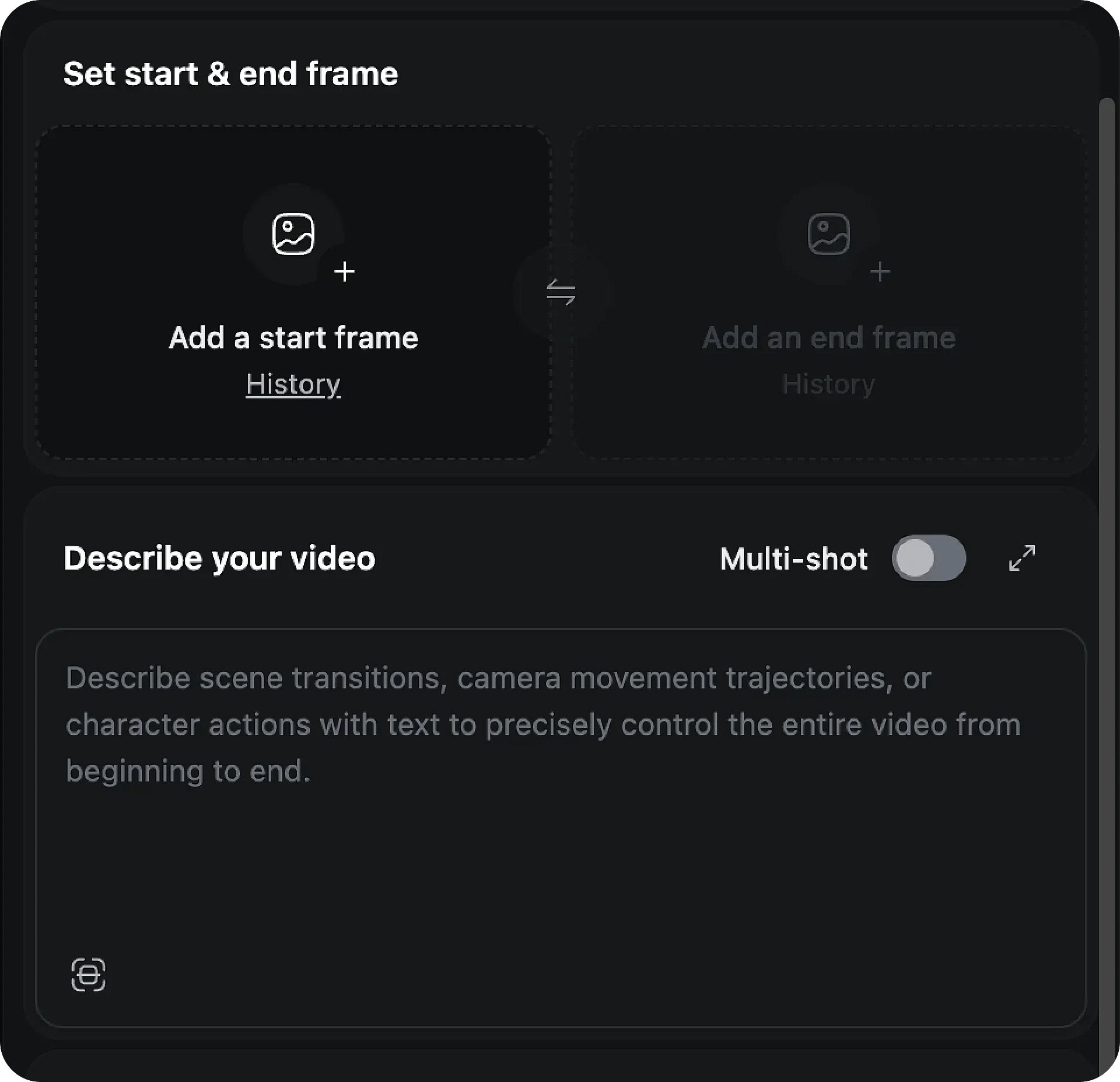

Prompt/Add Image

Enter a detailed prompt or upload a reference image, video, or audio file to define the style, mood, and visuals of your project. You can upload up to 12 files across different formats to guide the output.

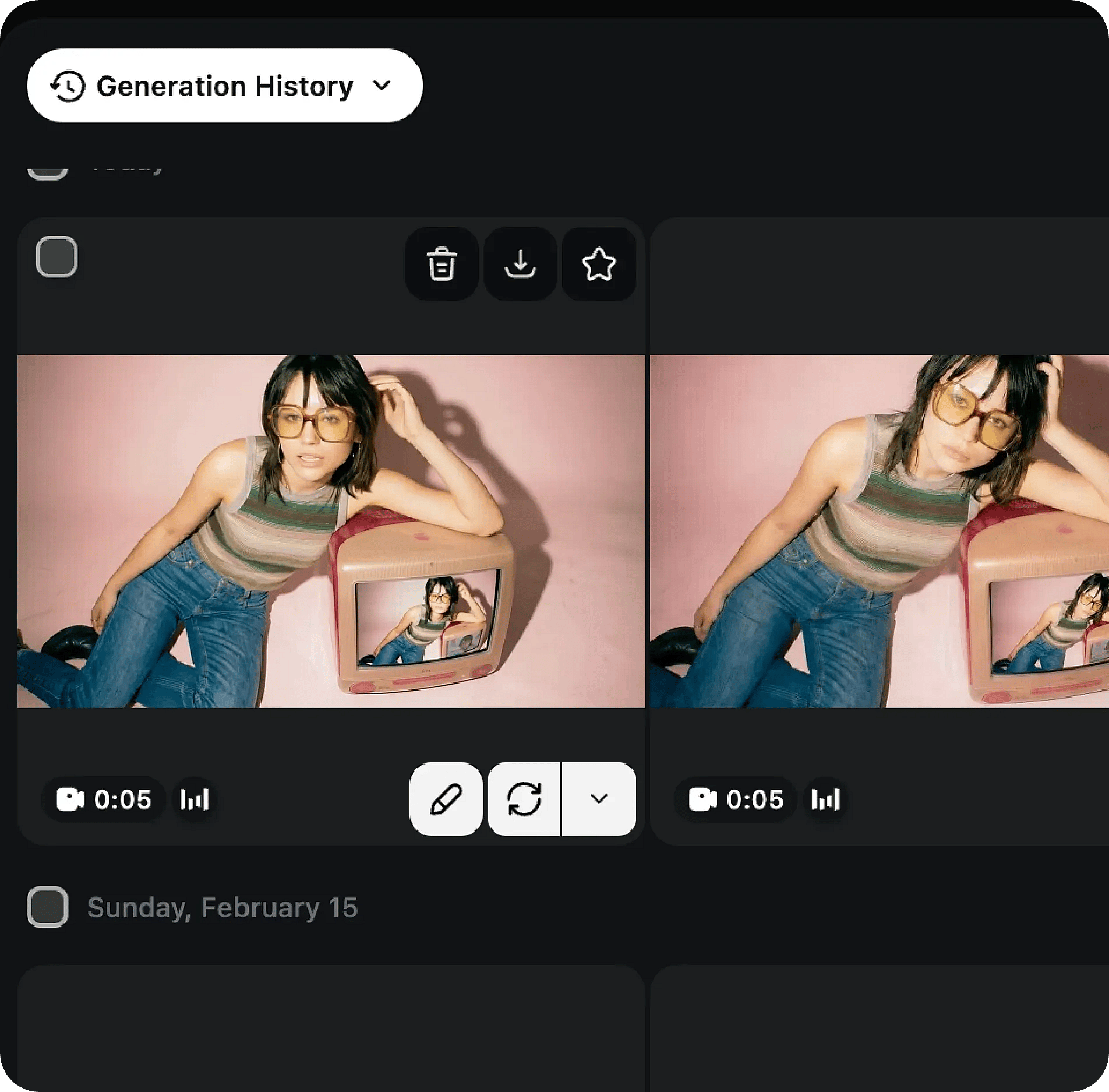

Generate and adjust

Review the generated results and adjust your prompt and style to align with your vision.

How To Get The Best Results With Seedance 2.0

Define the theme

Start by choosing the main subject or concept for your video. Clear direction helps the Seedance 2.0 AI video model understand what to focus on.

Experiment with styles

Test different visual styles, moods, and settings. Include references from images, videos, and audio to shape the look and feel of your video.

Consider combinations

Provide visual details like colors, lighting, textures, and effects, and combine multi-modal inputs to give the Seedance 2.0 Video Generator a better cinematic context.

Integrate elements

Mix images, video clips, and audio to guide motion, camera angles, characters, and scenes for a cohesive output.

Embrace originality

Utilize tools like Auto Enhance to refine your prompt and improve the accuracy of your AI video.

Patience and iteration

Make small adjustments to your prompts, inputs, and references across multiple generations until the video aligns with your vision.
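If you iterate on prompts often, it can help to keep the theme, style, visual details, and camera direction as separate pieces and combine them each time. The helper below is a minimal sketch of that idea. `build_prompt` and the `Style:`, `Details:`, and `Camera:` labels are illustrative assumptions, not a prompt syntax that Seedance 2.0 requires.

```python
def build_prompt(theme, style="", details=(), camera=""):
    """Assemble a single prompt string from separate creative choices.

    Hypothetical helper: the labels used here are just one way to keep
    a prompt organized, not an official Seedance 2.0 format.
    """
    theme = theme.strip()
    if not theme:
        raise ValueError("a theme is required")

    parts = [theme]
    if style.strip():
        parts.append(f"Style: {style.strip()}")
    # Drop blank entries so the detail list stays clean.
    cleaned = [d.strip() for d in details if d.strip()]
    if cleaned:
        parts.append("Details: " + ", ".join(cleaned))
    if camera.strip():
        parts.append(f"Camera: {camera.strip()}")

    return ". ".join(parts) + "."


prompt = build_prompt(
    "A lighthouse at dusk",
    style="moody film noir",
    details=["cold blue light", "wet stone"],
    camera="slow dolly in",
)
print(prompt)
```

Changing one argument at a time between generations makes it easier to see which adjustment moved the result closer to your vision.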

Loved by Creators

I've tried many AI platforms... but OpenArt is still the best for character creation, image-to-video quality, and the variety of models available.

OpenArt offers an excellent array of AI tools... once you learn the features, the creative possibilities are amazing - and their support team is outstanding.

OpenArt is a very versatile AI platform with many image and video models, consistent character creation, and powerful editing tools.

Helpful AI tools all in one place - image and video generation, editing, and more... no need for multiple subscriptions.

Customer service is always quick and helpful... and the platform keeps getting better with new features and tools.

With so many AI platforms out there, OpenArt stood out as a stellar choice - I'd recommend it to any creator.

This platform includes everything you need for AI content creation - images, video, characters, audio, and multiple AI models in one place.

OpenArt is pushing the boundaries of AI video creation... and it's clear the team is committed to improving the creator experience.

I made my first AI music video with OpenArt... and their support team was the best help I've ever experienced.

I'm very happy with the support - my issue was resolved quickly and the team made the process easy.

Create without limits

Join millions of creators using OpenArt to generate images, videos, characters, and stories - all in one platform.