In 1859, the poet Charles Baudelaire wrote one of the most famous takedowns in the history of art criticism. His target was photography. He called it "art's most mortal enemy" and dismissed it as the refuge of every failed painter too untalented to pick up a real brush. Photography, in his view, was a machine pretending to do the work of an artist. It required no imagination, no skill, and no creative vision. It was purely mechanical, and therefore purely soulless. And yet Dorothea Lange's Migrant Mother helped mobilize federal food aid during the Great Depression, the Apollo 8 crew's Earthrise photograph reshaped how an entire generation understood our planet, and photography went on to become one of the most important creative forms humans have ever developed.

A little over a century later, synthesizers got the same treatment. When synth-pop began filling the charts in the late 1970s and early 1980s, the backlash was immediate and visceral. Gary Numan described the "hostility" he faced from critics who didn't think electronic music qualified as real music, because they believed the machines were doing the work. OMD frontman Andy McCluskey put it plainly: there were a great many people who genuinely thought the equipment wrote the song for you. Queen printed "No Synthesizers!" on four consecutive album sleeves in the 1970s, as if to reassure listeners that what they were hearing was still legitimate. And by 1982, the UK Musicians' Union had voted to ban synthesizers and drum machines outright, convinced that electronic instruments would make human players obsolete. And yet today, electronic music is one of the most listened-to genres on earth, and synthesizers are so deeply embedded in modern production that most pop songs wouldn't exist without them.

These critiques probably sound eerily familiar. If you swapped "photography" or "synthesizer" for "AI art," you could find nearly identical arguments posted in comment sections and published in think pieces today, because while the tools change, the anxiety clearly doesn't.

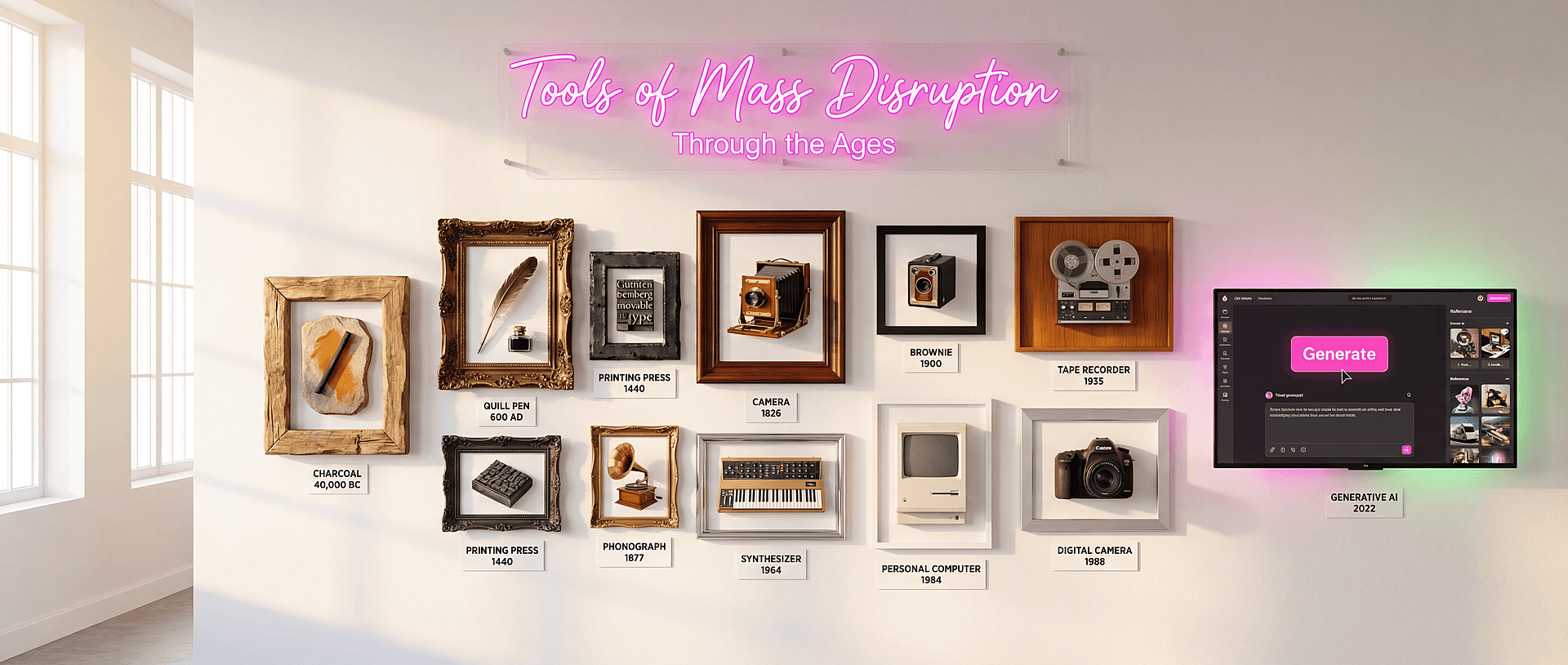

We are, as a species, compulsively creative. We build tools, we extend our capabilities, and then we spend a generation or two being deeply unsettled by what we've built before finally absorbing it into the fabric of normal life. Cities once felt like an affront to the natural world. The printing press was going to destroy the memory of scholars. Recorded music was supposed to end live performance. The internet was going to rot our brains (jury's still out on this one). But in each of these cases, the tool eventually became so thoroughly woven into human culture that we stopped seeing it as foreign and started seeing it as ours.

The conversation around "AI art" is deep in the fear phase right now, and the label itself is part of what's keeping it there.

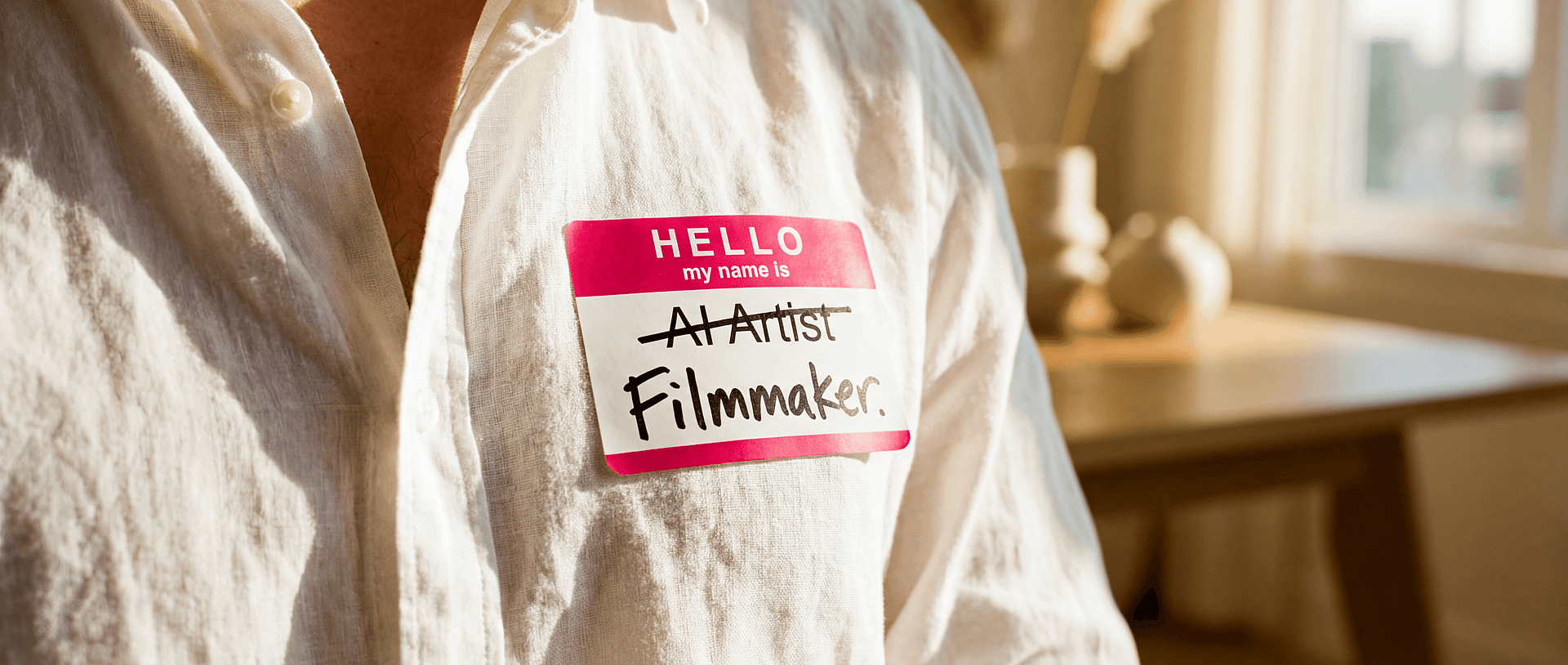

When you call something "AI art," you decenter the human artist

A photographer isn't a "camera artist." A filmmaker isn't a "lens storyteller." A graphic designer isn't a "software illustrator." We don't name creative practitioners by their tools, because the tool isn't what makes the work meaningful or distinctive as an end result. The creative vision behind it is what does that.

When you call something "AI art," you put the tool in the spotlight and push the human right off the stage. Every conversation that follows naturally orbits around the software: Is the AI creative? Does it deserve credit? Is it really art if a human didn't make every mark? These are interesting philosophical questions, but they're almost entirely beside the point for the millions of people using generative tools to do real creative work every single day.

The label also flattens an enormous range of creative practices into one reductive term. Consider what "AI art" is currently being asked to cover: a brand creative director prototyping visual directions for a campaign, a solo illustrator building a consistent fantasy world they couldn't afford to render any other way, a filmmaker generating storyboard imagery at speed, a fashion designer exploring colorways in real time, a small business founder who finally has a way to make content that feels like their brand instead of like stock footage. These are wildly different practices with different goals, different skill sets, and different outputs. The only thing they share is one piece of the pipeline, and that shared tool has somehow become the defining characteristic of all of them, which would be a bit like calling every piece of writing created in Google Docs "software literature."

Even the art world can't agree on what the term means. A recent Artsy survey of more than 300 gallery professionals found three competing definitions in active use: some define "AI art" as fully prompt-based work, others focus on artistic intent regardless of tool, and others apply the label to anything where AI meaningfully shapes the outcome. The survey's own conclusion was telling: these debates about legitimacy and value are happening without a common vocabulary. Professionals in the same field are using the same term to describe fundamentally different things, and then arguing about whether those different things have merit.

The legal world is starting to weigh in

The court of cultural opinion tends to dominate these conversations, but it's worth paying attention to what's happening in actual courts. When the legal system starts hearing cases and making room for how a new technology is actually being used, that's historically one of the clearest signs that it's here to stay and that culture will eventually follow. It always starts with a few brave people who just want to create, and little by little their work becomes recognized not just in law but in everyday life.

On March 2, 2026, the U.S. Supreme Court declined to hear Thaler v. Perlmutter, the decade-long case about whether AI can be an "author" under copyright law. That left the lower court's ruling in place, and the ruling was clear: copyright requires human authorship. Works created autonomously by AI, with no human creative contribution, cannot be copyrighted. But the courts left room for works where humans maintain genuine creative control through direction, prompting, and alteration. In other words, the legal system already distinguishes between "the AI made this" and "a human made this using AI." When we call something "AI art," we linguistically frame it as the first category, even when the reality is almost always the second.

So what should we call it?

Probably the simplest thing: call it what it produces. Illustration. Concept art. Brand imagery. Motion graphics. Visual design. Storyboards. Campaign photography. The medium is the output, not the pipeline that created it, and nobody asks a photographer whether their image was shot on a Canon or a Hasselblad before deciding what to call it.

Or, who knows, maybe it will be an entirely new word that doesn't exist yet, one we'll all get to help shape. Birthing lexicon to swaddle our newborn creative tools is its own kind of hard work.

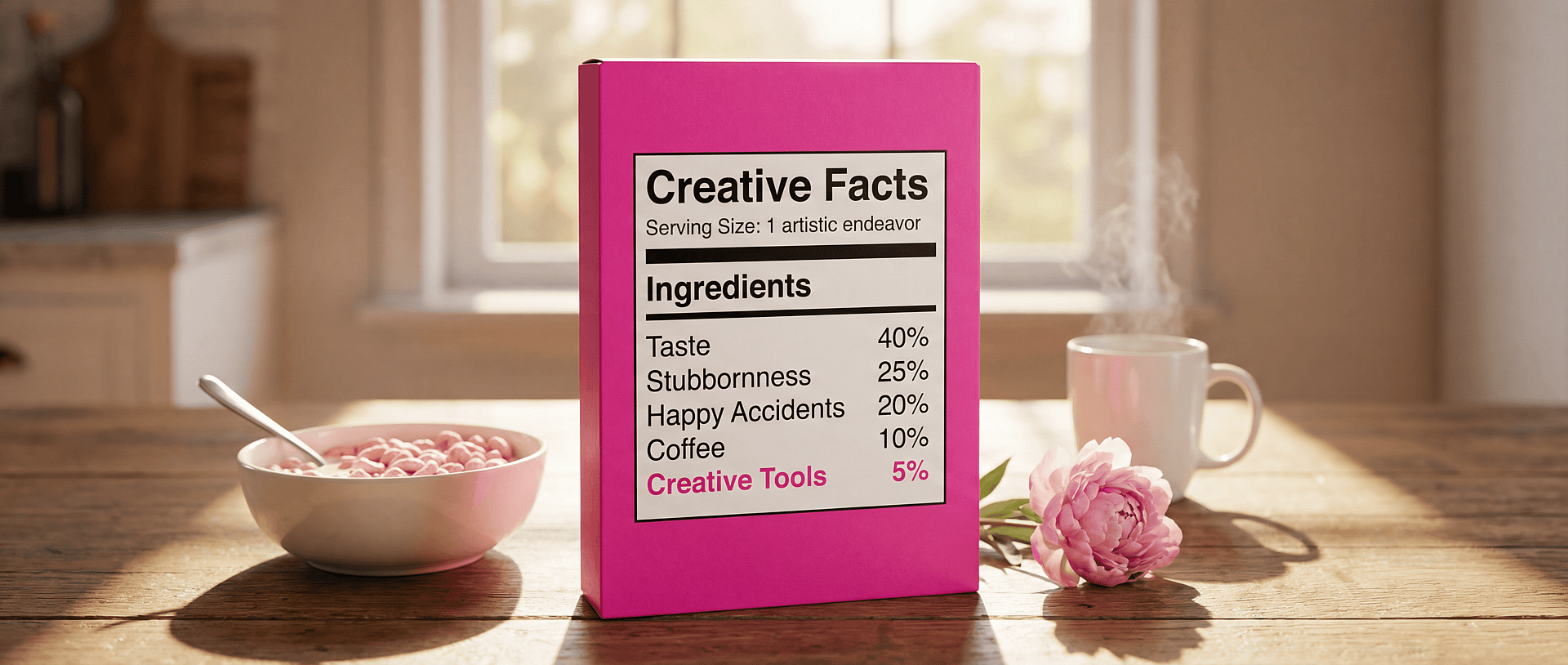

What we should probably stop doing is defaulting to a term that makes the AI the author and the human the bystander. Because in every studio, brand team, and creative workflow where these tools are actually being used, that's not what's happening. What's happening is that people are making decisions, directing, editing, curating, and rejecting. They're exercising taste and doing the work that has always made creative work creative, just with a new instrument in their hands.

And if you're wondering whether this shift is already happening in practice, it is.

Positive signals

We know that it can feel like there's a lot of shade being thrown at creators who use these tools right now, and that it's hard to tell how far we really are from a cultural shift where this kind of work is simply accepted for what it is. But the positive signals are already there. Every generation's technological revolution has its naysayers, but there are also always the Gary Numans and Andy McCluskeys of the world, already jamming out on their synths and pioneering entirely new sounds before the rest of the culture catches up. The same thing is happening right now with GenAI.

Paul McCartney used AI to extract John Lennon's voice from a decades-old demo tape to complete "Now and Then," the final Beatles song. Nobody calls it an "AI song." They call it a Beatles song, because the AI was the instrument and the music was what mattered.

Grimes has built an entire creative infrastructure around AI, from her Elf.Tech voice model that lets fans create music using her AI-generated voice to visual art sold at Christie's. She doesn't call herself an "AI artist." She's an artist who uses AI, among many other tools, to realize a vision that spans music, visual art, and technology.

And when The Brutalist won multiple Oscars in 2025, including Best Actor for Adrien Brody, it did so after using AI to refine the Hungarian accents that Brody and Felicity Jones had spent months learning with a dialect coach. The technology polished specific vowels and sounds in post-production to preserve the authenticity of performances that were, in every meaningful sense, the actors' own. Nobody calls The Brutalist an "AI film." It's a film, and the AI was one small, invisible part of how it got made.

How we think about this at OpenArt

When we talk about the people who use OpenArt, we don't call them "AI artists." We call them creators, storytellers, filmmakers, founders. When we describe what the platform does, we don't say "AI generates images for you." We say "bring your characters, stories, brands, and worlds to life." That's not a branding exercise. It's a reflection of something we genuinely believe, informed by the same history this piece has been tracing. (In fact, every image in this post was made with generative tools on OpenArt.)

The pattern tells us that (1) the fear is real, (2) it's fundamentally human, and (3) this too shall pass. It tells us that the tools always get absorbed into the larger story of how people make things. And it tells us that the most useful thing we can do right now is build tools that stay out of the way and let creators focus on what they're making, and why.

There is something genuinely beautiful about the fact that we keep finding ourselves here, in yet another moment where humans have built something new and are arguing about whether it counts. More than a century and a half after Baudelaire tried to shut photography down and decades after the Musicians' Union tried to ban the synthesizer, we're still inventing new ways to create and share with each other, and no amount of early-stage resistance has ever managed to stop that from happening.