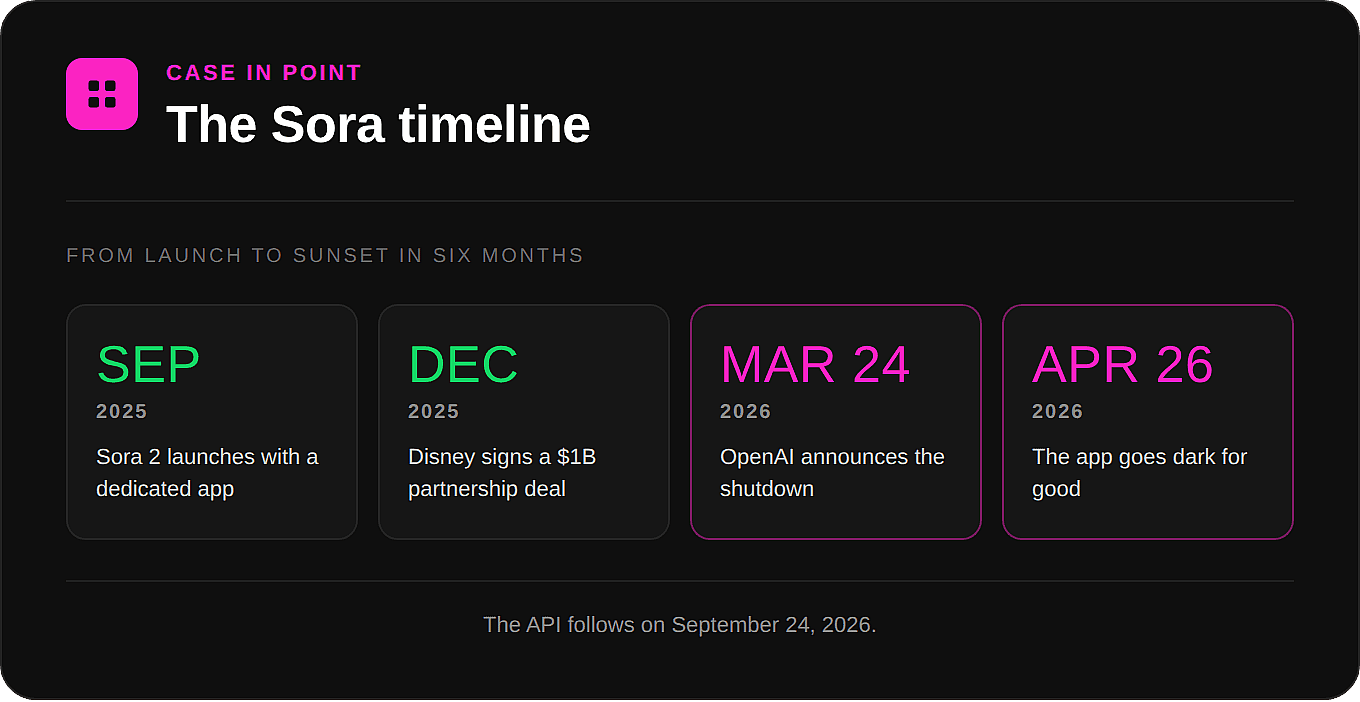

On March 24, OpenAI announced it was shutting down Sora. The app goes dark on April 26. The API follows in September. The billion-dollar Disney partnership signed back in December dissolved along with it, and according to reporting, Disney found out less than an hour before the rest of the world did.

If your creative workflow lived inside Sora, you have a few weeks to export your work and figure out what's next. That's the kind of timeline that turns an abstract debate about AI strategy into a very concrete problem.

It also points at a question worth thinking through carefully: in a field this volatile, what's the right way to build a creative practice on top of AI tools? It's a question OpenArt has been building a particular answer to from the start. Before getting to that answer, though, it's worth looking at why the question keeps getting harder to avoid.

The landscape isn't going to settle down

There's a natural instinct, when something is moving fast, to wait for it to slow down. To pick the eventual winner once the dust clears. It's a reasonable instinct, but it doesn't really match what's happening in AI image and video generation right now.

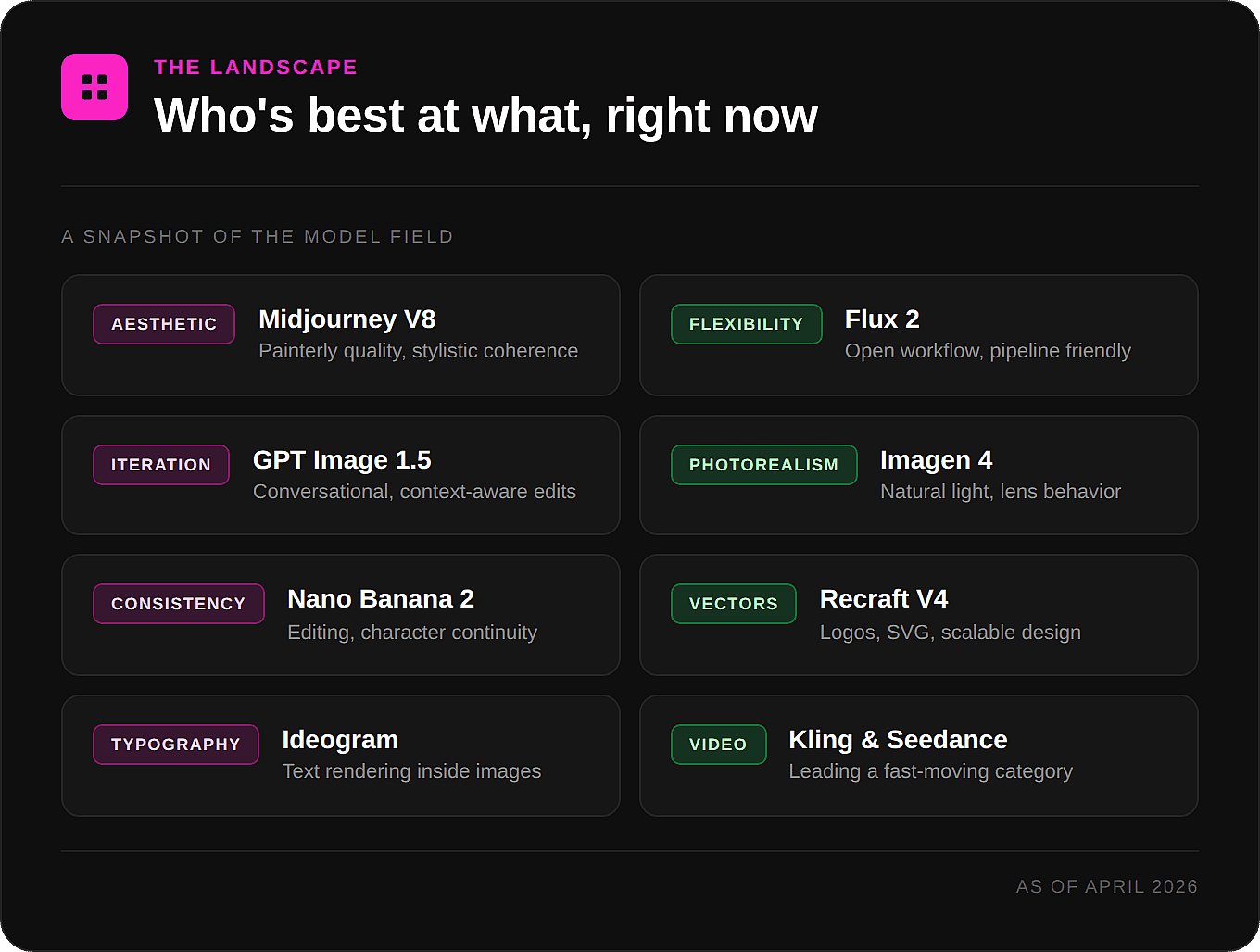

The pace isn't slowing. If anything, it's picking up. In just the last few months, Midjourney shipped V8 with a rewritten engine and native 2K output. Black Forest Labs released Flux 2. Google's Nano Banana 2 became one of the fastest image models available. Imagen 4 raised the bar for photorealism again. Recraft V4 took over as the model people reach for when they need vectors. Ideogram keeps leading on text rendering. Kling and Seedance are pushing video forward on a near-monthly cadence. And Sora, which a year ago looked like the future of AI video, is being sunset.

The pattern isn't a market consolidating around a few stable winners. It's a research frontier where the leader changes regularly, capabilities leapfrog each other constantly, and the economics of running these models are demanding enough that even well-funded labs are making hard tradeoffs. Sora wasn't shut down because it was bad. By most accounts the underlying model is impressive. It was shut down because video generation is enormously expensive to run, the user numbers didn't justify the compute, and OpenAI needed those resources elsewhere. That same math applies, in some form, to almost every model in the space.

No single model is best at everything

Once you're working with this landscape regularly, the question of "which model is best" starts to feel like the wrong question. Best for what?

A rough sketch of where things stand right now:

Midjourney continues to set the bar for aesthetic quality and stylistic coherence. If you want an image that looks beautiful as soon as it generates, very little touches it. The tradeoff is a closed ecosystem, with no real API, limited integration into broader workflows, and a Discord-and-subscription model that doesn't fit every use case.

Flux 2 is where a lot of the open-source workflow community has landed. It's flexible, controllable, and plays well with the broader ecosystem of fine-tuning, LoRAs, and pipeline tools. If you need to chain things together or build something custom, it's hard to beat.

GPT Image 1.5 is built around iteration. The conversational interface means you can refine an image the way you'd talk to a collaborator: make this part warmer, move that element, try it again with a different background. The model holds context across the whole conversation, and that iteration loop is the actual product.

Google Imagen 4 and Nano Banana 2 are doing remarkable work on photorealism and speed. Nano Banana 2 in particular has become a go-to for editing and character consistency, which is the kind of unglamorous infrastructure problem that determines whether you can finish a project or just generate impressive one-offs.

Recraft V4 is the model to reach for when you need vectors, logos, or designs that need to scale cleanly.

Ideogram still leads on text rendering, which matters more than people expect once you start trying to put words inside images.

Kling and Seedance are leading the video conversation right now, with new versions landing at a pace that makes any "best video model" claim feel temporary by definition.

The takeaway isn't that one of these is the right answer. It's that a photographer, a concept artist, a small business owner making product mockups, and a filmmaker storyboarding a scene all need different things, and the model that's best for one of them is rarely the best for the others.

The cost of locking in

When you commit your creative process to a single model, you take on more than you might realize. You inherit its limitations, its pricing changes, its quality regressions when a new version ships, and its risks if the company behind it changes direction. You lose the flexibility to take advantage of whatever launches next month. And you take on the kind of tail risk that, until very recently, a lot of people were treating as theoretical.

The Sora shutdown is the loudest example so far, but it isn't the only one. Models get deprecated. Pricing changes. APIs disappear. Quality sometimes shifts in ways that break workflows that were working fine the day before. None of this means any individual model is a bad choice. It just means that treating any one model as the foundation of a practice is more fragile than it looks.

The bet OpenArt made

The alternative to locking in isn't dramatic. It's just to treat AI models the way photographers treat lenses, or the way musicians treat instruments. You learn what each one is good for, you reach for the right one when the work calls for it, and you don't get too attached to any single one of them.

That's the premise OpenArt was built on. Not "we have more models than the other guys" as a feature checklist, but a position about where the power in a creative practice should sit: with the person making the work, not with whichever lab happens to be in the lead this quarter. The Suite is designed so that the right model for the job is one click away, rather than another subscription, another interface, and another file format to wrangle. When a new model launches, it shows up in the workflow you're already using. When a model shuts down, and more will, the work moves with you instead of getting stranded.

At OpenArt, we believe that creators shouldn't have to re-platform every time the landscape shifts, and that the job of a creative tool is to protect the work from that kind of churn, not pass it through. There are other ways to stay flexible, of course. You can build your own multi-tool workflow across separate platforms, and plenty of people do. The thing worth holding onto, whichever route you take, is that some version of flexibility is increasingly the only sensible approach.

A few practical principles

If you're trying to figure out how to think about this for your own work, here are a few things that have held up well so far:

Match the model to the output, not the other way around. If you find yourself reshaping your idea to fit what one model can do, that's a signal worth paying attention to.

Keep your work portable. Prompts, reference libraries, decisions, and exports are all easier to move if you build them to be moved from the start.

Stay curious about new models, especially the ones outside whatever you're already using. The model that's best for your work today probably isn't the one that'll be best six months from now, and that's worth being excited about, not anxious about.

And don't confuse familiarity with the right fit. Sticking with a model because you know it well is different from sticking with it because it's the best one for the job.
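For anyone who keeps their exports in plain folders, the "keep your work portable" principle can be made concrete with very little code. What follows is a minimal sketch, not a description of how OpenArt or any other platform stores work: it assumes Python 3.9 or later, and every name in it (`PromptRecord`, `save_record`, `load_record`, and all the field names) is hypothetical, chosen for illustration. The idea is simply to save the prompt, the model label, the reference files, and your notes as a JSON file next to each export, so the record survives even if the tool that produced it does not.

```python
import json
from dataclasses import dataclass, field, asdict
from pathlib import Path


@dataclass
class PromptRecord:
    """One generation, described without relying on any platform's own format.

    All field names are hypothetical and exist only for this sketch.
    """
    prompt: str
    model: str                 # free-text label, e.g. "Flux 2"
    output_file: str           # the exported image or video this record describes
    reference_files: list[str] = field(default_factory=list)
    notes: str = ""            # decisions worth remembering later


def save_record(record: PromptRecord, folder: Path) -> Path:
    """Write the record as a JSON sidecar named after the exported file."""
    folder.mkdir(parents=True, exist_ok=True)
    sidecar = folder / (Path(record.output_file).stem + ".json")
    sidecar.write_text(json.dumps(asdict(record), indent=2), encoding="utf-8")
    return sidecar


def load_record(sidecar: Path) -> PromptRecord:
    """Read a sidecar back into a PromptRecord."""
    return PromptRecord(**json.loads(sidecar.read_text(encoding="utf-8")))


if __name__ == "__main__":
    record = PromptRecord(
        prompt="Storyboard frame: rainy street at dusk, wide shot",
        model="Flux 2",
        output_file="frame_012.png",
        reference_files=["moodboard/street_03.jpg"],
        notes="Warmer palette than frame 011; keep the signage legible.",
    )
    path = save_record(record, Path("exports"))
    print(f"Saved {path}")
```

Because the sidecar is plain JSON, it can be read by any other tool or re-entered by hand into whichever model you reach for next.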

Where this leaves us

The multi-model era isn't really a forecast at this point. It's just a description of the present. Sora's shutdown is a sharp reminder of why flexibility matters, but it isn't the first reminder and it won't be the last. The creators and teams who'll do well in this period are probably the ones who treat AI models as a toolkit rather than a commitment, who pay attention to what each model is actually good at, and who let their workflows evolve as the landscape does.

That's the bet OpenArt is built around, and it's the bet we'll keep making every time another model rises, falls, or rewrites the rules. None of this requires abandoning the models you love. It just means holding them a little more loosely, and leaving room for whatever comes next.